Paper Reading: Objective Robustness in Deep RL (2022)

by: Jack Koch, Lauro Langosco, Jacob Pfau, James Le, Lee Sharkey

I came across this paper via a YouTube video on AI/ML Alignment. Issues of alignment asks the questions of whether or not an AI actually models the intended outcomes desired. Sometimes you don’t even have the right edge cases to know whether or not a wrong thing has been learned or represented. In a previous post, I wrote about explainability. There’s also an adjacent concept called interpretability. I grabbed the definitions below from kdnuggets.com.

The difference between machine learning explainability and interpretability

In the context of machine learning and artificial intelligence, explainability and interpretability are often used interchangeably. While they are very closely related, it’s worth unpicking the differences, if only to see how complicated things can get once you start digging deeper into machine learning systems.

Interpretability is about the extent to which a cause and effect can be observed within a system. Or, to put it another way, it is the extent to which you are able to predict what is going to happen, given a change in input or algorithmic parameters. It’s being able to look at an algorithm and go yep, I can see what’s happening here.

Explainability, meanwhile, is the extent to which the internal mechanics of a machine or deep learning system can be explained in human terms. It’s easy to miss the subtle difference with interpretability, but consider it like this: interpretability is about being able to discern the mechanics without necessarily knowing why. Explainability is being able to quite literally explain what is happening.

Think of it this way: say you’re doing a science experiment at school. The experiment might be interpretable insofar as you can see what you’re doing, but it is only really explainable once you dig into the chemistry behind what you can see happening.

Abstract

We study objective robustness failures, a type of out-of-distribution robustness failure in reinforcement learning (RL). Objective robustness failures occur when an RL agent retains its capabilities out-of-distribution yet pursues the wrong objective. This kind of failure presents different risks than the robustness problems usually considered in the literature, since it involves agents that leverage their capabilities to pursue the wrong objective rather than simply failing to do anything useful. We provide the first explicit empirical demonstrations of objective robustness failures and present a partial characterization of its causes.

Introduction

While capability robustness failures are concerning, objective robustness failures are potentially more dangerous, since an agent that capably pursues an incorrect objective can leverage its capabilities to visit arbitrarily bad states. In contrast, the only risks from capability robustness failure are those of accidents due to its incompetence.

We highlight the class of objective robustness failures, differentiate it from other robustness problems, and discuss why it is important to address (Section 2).

We demonstrate that objective robustness failures occur in practice by training deep RL agents on the Procgen benchmark [27], a set of diverse procedurally generated environments designed to induce robust generalization, and deploying them on slightly modified environments (Section 3).

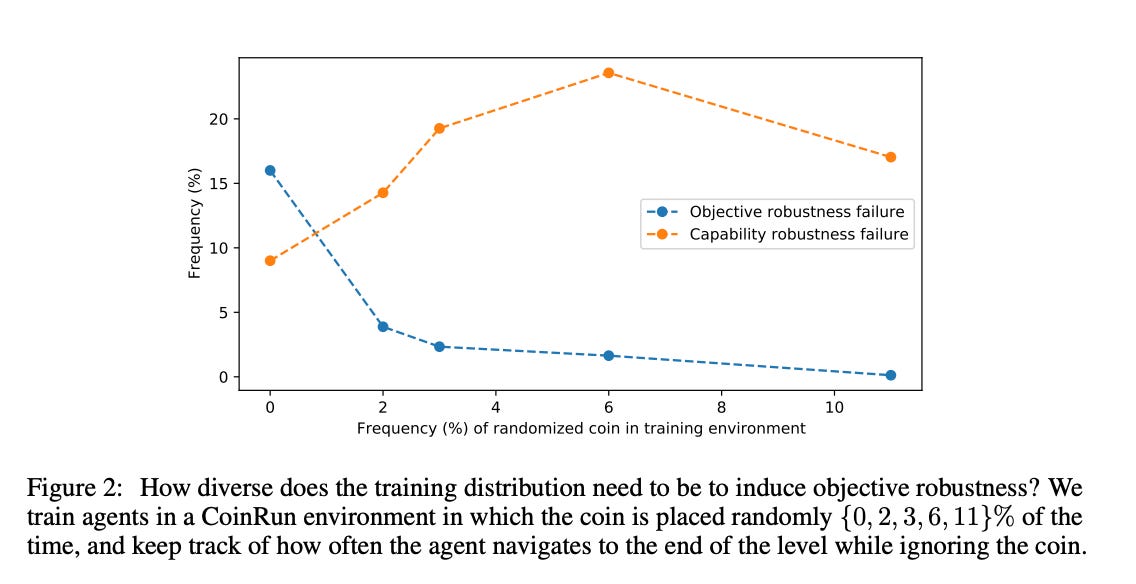

We show that objective robustness failures may be alleviated by increasing the diversity of the training distribution so that the agent may learn to distinguish the reward from potential proxies for it (Section 3.1.1)